As an intern at a big company, I was given the task of getting comfortable with Docker. The problem is that Docker is quite new, so there aren’t many good tutorials out there. After reading a bunch of articles and sparse tutorials (and even taking the official tutorial at https://www.docker.com/tryit), I still struggled to get a firm grip on what Docker is even supposed to be used for. So I decided to write this tutorial for total beginners like me.

Docker vs VirtualBox

There are many different explanations on the internet about what Docker is and when to use it. Most of them, however, complicate things rather than give practical information to a total beginner.

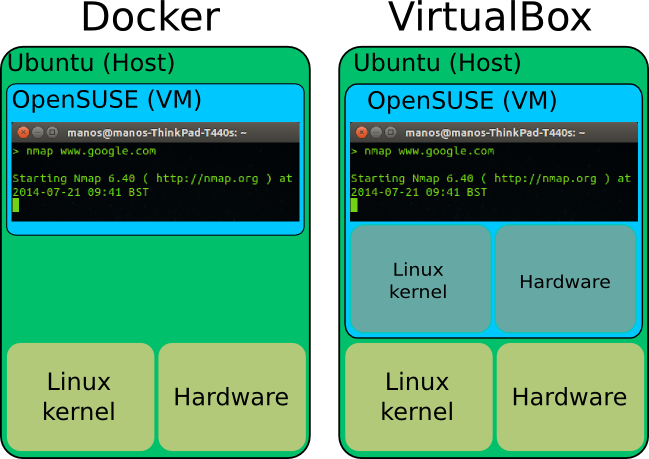

Simply put, Docker is a lightweight alternative to virtual machines. I will use VirtualBox as a comparison since it’s very easy for anyone to download and see the differences for themselves.

The application VirtualBox is essentially a virtual machine manager.

Each of the OSes you see in the picture above is an installed virtual machine. Each such machine has its own installed OS, kernel, and virtual devices like hard disks, network cards etc. All this takes a considerable amount of memory and needs extra processing power. Other virtual machine (VM) managers, like VMware and Parallels, behave the same way.

Now imagine that we want to use nmap from an openSUSE machine but we are on Ubuntu. Using VirtualBox we would have to install the whole OS and then run it as a virtual machine. The memory consumption is huge for such a trivial task.

In contrast to VirtualBox or any other virtual machine, Docker doesn’t install the whole OS. Instead it uses the kernel and hardware of our primary computer (the host). This makes Docker start up very fast and consume only a fraction of the memory we would otherwise need. See the benefits? Imagine if we wanted to run 4 different programs on 4 different OSes. With virtual machines, that would take at least 2GB of RAM.

But why would you want to run nmap on openSUSE instead of the host computer? Well, this was just a silly example. There are other examples that show the importance of a tool like Docker. Imagine that you’re a developer and you want to test your program on 10 different distributions. Or maybe you are the server administrator at a company and just updated your web server, but the update broke something. No problem, you can run your web server virtualized on the older system version. Or maybe you want to run a web service in quarantine for security reasons. As you see, there are loads of different uses.

One question might arise though: how do we separate each “virtual machine” from the rest of the stuff on our computer? Docker solves this with different kernel (and non-kernel) mechanisms. We don’t have to bother about them though, since Docker takes care of everything for us. That’s the beauty of it after all: simplicity.

Install docker

Docker is in the Ubuntu repositories (Ubuntu 14.04 here) under the package name docker.io, so it’s as straightforward as:

sudo apt-get install docker.io

Once installed, the Docker daemon (service) will be running. You can check that with:

sudo service docker.io status

The daemon is called docker.io, as you might have noticed. The client that we will use is simply called docker. Pay attention to this tiny but significant detail.

Configuration

Do these two things before using docker to avoid any annoying warnings and problems.

First, we need to add ourselves to the docker group. This lets us use docker without having to type sudo every time:

sudo adduser <your username here> docker

Log out and then back in.

Second, we will edit the daemon configuration to ensure that it doesn’t use any local DNS servers (like 127.0.0.1). Use your favourite editor to edit the /etc/default/docker.io file. Uncomment the line with DOCKER_OPTS. The resulting file looks like this for me:

# Docker Upstart and SysVinit configuration file

# Customize location of Docker binary (especially for development testing).
#DOCKER="/usr/local/bin/docker"

# Use DOCKER_OPTS to modify the daemon startup options.
DOCKER_OPTS="--dns 8.8.8.8 --dns 8.8.4.4"

# If you need Docker to use an HTTP proxy, it can also be specified here.
#export http_proxy="http://127.0.0.1:3128/"

# This is also a handy place to tweak where Docker's temporary files go.
#export TMPDIR="/mnt/bigdrive/docker-tmp"
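If you would rather not edit the file by hand, the uncommenting step can be sketched with sed. This is only an illustration: enable_docker_opts is a made-up helper name, the sample line assumes the double-dash --dns spelling of the option, and the demo works on a throwaway copy rather than the real /etc/default/docker.io.

```shell
# enable_docker_opts FILE: remove the leading '#' from the DOCKER_OPTS line.
# (Hypothetical helper for illustration, not something Docker ships.)
enable_docker_opts() {
    conf="$1"
    tmp="$(mktemp)"
    # Only a line that starts with "#DOCKER_OPTS=" is touched;
    # ordinary comment lines are left alone.
    sed 's/^#\(DOCKER_OPTS=\)/\1/' "$conf" > "$tmp" && cat "$tmp" > "$conf"
    rm -f "$tmp"
}

# Try it on a throwaway sample instead of the real config file
sample="$(mktemp)"
printf '%s\n' \
    '# Use DOCKER_OPTS to modify the daemon startup options.' \
    '#DOCKER_OPTS="--dns 8.8.8.8 --dns 8.8.4.4"' > "$sample"
enable_docker_opts "$sample"
grep '^DOCKER_OPTS=' "$sample"
```

On the real file you would need to run the helper with root privileges, and it is worth keeping a backup copy first.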

We need to restart the daemon for the change to take effect:

sudo service docker.io restart

Get an image to start with

In our scenario we want to virtualize an Arch machine. On VirtualBox, we would download the Arch .iso file and go through the installation process. In Docker we download a ready-made image from a central server. There are thousands of such images. You can even upload your own image, as you will see later.

> docker pull base/arch
Pulling repository base/arch
a64697d71089: Download complete
511136ea3c5a: Download complete
4bbfef585917: Download complete

This will download a default image for Arch Linux. “base/arch” is the identifier for the Arch Linux image.

To see a list of all the images locally stored on your computer type

> docker images
REPOSITORY          TAG                 IMAGE ID            CREATED             VIRTUAL SIZE
base/arch           2014.04.01          a64697d71089        12 weeks ago        277.1 MB
base/arch           latest              a64697d71089        12 weeks ago        277.1 MB

Starting processes with docker

Once we have an image, we can start doing things in it as if it was a virtual machine. The most common thing is to run bash in it:

> docker run -i -t base/arch bash
[root@8109626c57f5 /]#

See how the command prompt changed? Now we are inside the image (virtual machine) running a bash instance. In Docker jargon we are actually inside a container. The string 8109626c57f5 is the ID of the container. You don’t need to know much about that now. Just pay attention to where we got that ID from, because you will need it.

Let’s make some changes. First I want to install nmap. Since pacman is the default package manager in Arch, I will use that:

[root@8109626c57f5 /]# pacman -S nmap
resolving dependencies...
looking for inter-conflicts...
..

Let’s run nmap to see if it works:

[root@8109626c57f5 /]# nmap www.google.com
Starting Nmap 6.46 ( http://nmap.org ) at 2014-07-18 13:33 UTC
Nmap scan report for www.google.com (173.194.34.116)
Host is up (0.00097s latency).
..

It seems we installed it successfully! Let’s also create a file:

[root@8109626c57f5 /]# touch TESTFILE

So now we have installed nmap and created a file in this container. Let’s exit bash:

[root@8109626c57f5 /]# exit
exit
>

In VirtualBox you can save the state of the virtual machine at any time and load it later. The same is possible with Docker. For this I will need the ID of the container that I was using. In our case that is 8109626c57f5 (it was shown in the terminal prompt the whole time). In case you don’t remember the ID or you have many different containers, you can list all the containers:

> docker ps -a
CONTAINER ID        IMAGE                  COMMAND             CREATED             STATUS
8109626c57f5        base/arch:2014.04.01   bash                25 minutes ago      Exit 0
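When the list grows long, picking the right ID by eye gets tedious. As a rough sketch, the listing can be filtered with awk. The helper name container_ids_for is made up for this example, and it assumes the column layout shown in this tutorial (ID first, image second); the sample input is the listing from above.

```shell
# container_ids_for IMAGE: read `docker ps -a` style output on stdin and
# print the IDs of the containers created from IMAGE, whatever the tag.
# (Hypothetical helper; assumes ID in column 1 and image in column 2.)
container_ids_for() {
    awk -v img="$1" 'NR > 1 {
        split($2, parts, ":")          # "base/arch:2014.04.01" -> "base/arch"
        if (parts[1] == img) print $1
    }'
}

# Sample input copied from the listing in this tutorial
container_ids_for base/arch <<'EOF'
CONTAINER ID        IMAGE                  COMMAND   CREATED          STATUS
8109626c57f5        base/arch:2014.04.01   bash      25 minutes ago   Exit 0
EOF
```

With a running daemon the same helper would be fed from a pipe, as in docker ps -a | container_ids_for base/arch.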

Let’s save the current state to a new image called mynewimage:

> docker commit -m "Installed nmap and created a file" 8109626c57f5 mynewimage
6bf56047833bd41c43c9fc3073424f37bfbc96993b65b868cb8d6a336ac28b0b

Now we have the saved image locally on our computer. We can load it anytime we want to come back to this state. Here is the demonstration:

> docker run -i -t mynewimage bash
[root@55c343f1643a /]# ls
TESTFILE bin boot dev etc home lib lib64 mnt opt proc root run sbin srv sys tmp usr var
[root@55c343f1643a /]# whereis nmap
nmap: /usr/bin/nmap /usr/share/nmap /usr/share/man/man1/nmap.1.gz

Loading the image on another computer

We now have two images, the initial Arch image we started with and the new image that we saved:

> docker images
REPOSITORY          TAG                 IMAGE ID            CREATED             VIRTUAL SIZE
mynewimage          latest              6bf56047833b        2 hours ago         305.8 MB
base/arch           2014.04.01          a64697d71089        12 weeks ago        277.1 MB
base/arch           latest              a64697d71089        12 weeks ago        277.1 MB

It’s time to load the new image on a totally different computer. First I need to save the image on a server though. Luckily for a Docker user, this is very simple. Start by making an account at https://hub.docker.com/

Once that is done we need to upload the image to the hub. However, the image has to be named in a specific format, namely username/whatever.
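To make the naming rule concrete, here is a small sketch that builds such a name from its two parts and refuses inputs that would not fit the pattern. The function name hub_repo_name is made up for this example, and the check is deliberately simple; it does not attempt to mirror every rule the hub applies to names.

```shell
# hub_repo_name USER IMAGE: print the "username/whatever" form of a name.
# (Hypothetical helper; only checks that both parts exist and that IMAGE
# does not already contain a slash.)
hub_repo_name() {
    user="${1:-}"
    image="${2:-}"
    if [ -z "$user" ] || [ -z "$image" ]; then
        echo "usage: hub_repo_name USER IMAGE" >&2
        return 1
    fi
    case "$image" in
        */*) echo "image name already has a namespace: $image" >&2; return 1 ;;
    esac
    printf '%s/%s\n' "$user" "$image"
}

hub_repo_name pithikos mynewimage
```

If the image already exists locally under a plain name, the docker tag subcommand can also attach the username/whatever name to it without committing the container a second time.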

Let's save the image following that rule:

> docker commit -m "Installed nmap and created a file" 8109626c57f5 pithikos/mynewimage
12079e0719ce517ec7687b4bf225381b99b880510cda3bc1e587ba1da067bd3b

First we need to log in to the server:

> docker login
Username (pithikos):
Login Succeeded

Then I upload the image to the server:

> docker push pithikos/mynewimage
The push refers to a repository [pithikos/mynewimage] (len: 1)
Sending image list
..

Once everything is uploaded, we can pull it from anywhere just as we did when we first pulled the Arch image.

I will do that from inside an openSUSE install on a totally different machine. First I try to run nmap.

As you see, it’s not installed on the computer. Let’s load the Arch image that we installed nmap on.

Once the download of the image is complete, we will run bash in it just to look around:
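Put together, the two steps on the second machine can be sketched as below. This assumes the image was pushed as pithikos/mynewimage, as earlier in this tutorial. The DOCKER variable and the fetch_and_open function are illustrative helpers of my own, not part of Docker; setting DOCKER=echo gives a dry run that only prints the commands.

```shell
# DOCKER can be overridden (for example with `echo`) to do a dry run.
DOCKER="${DOCKER:-docker}"

# fetch_and_open IMAGE: download IMAGE from the hub, then start an
# interactive bash inside it. (Hypothetical helper for illustration.)
fetch_and_open() {
    "$DOCKER" pull "$1" && "$DOCKER" run -i -t "$1" bash
}

# Usage on the second machine:
#   fetch_and_open pithikos/mynewimage
```

The pull and run subcommands are the same ones used earlier with base/arch; only the image name changes.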

As you see, the TESTFILE is in there and we can run nmap. We are running the same Arch that I ran earlier on Ubuntu, now on a totally different machine with a totally different OS, and it still runs as Arch.

A bit on containers

By now you probably have a good idea of what images are. Images are simply states of a “virtual machine”.

When we use docker run, whatever we are running is put inside a container. A container is pretty much a Linux concept that arose with the recent addition of Linux containers to the kernel. In practice, a container runs a process (or group of processes) in isolation from the rest of the system. This prevents the process in the container from accessing other processes or devices.
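One of the kernel features behind this isolation is namespaces. As a small sketch, assuming a Linux system with /proc mounted, the following lists the namespaces the current shell belongs to. The function name show_namespaces is made up for this example.

```shell
# show_namespaces: print the namespaces of the current shell (Linux only).
# (Hypothetical helper; reads the symlinks under /proc/<pid>/ns.)
show_namespaces() {
    for ns in /proc/$$/ns/*; do
        # each entry is a symlink whose target looks like "pid:[4026531836]"
        printf '%s -> %s\n' "${ns##*/}" "$(readlink "$ns")"
    done
}

show_namespaces
```

Run once on the host and once from a bash prompt inside a container, the numbers in brackets would be expected to differ for the namespaces that Docker sets up separately for the container.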

Every time we run a process with Docker, we are creating a new container.

> docker run ubuntu ping www.google.com
PING www.google.com (64.15.115.20) 56(84) bytes of data.
64 bytes from cache.google.com (64.15.115.20): icmp_seq=1 ttl=49 time=11.4 ms
64 bytes from cache.google.com (64.15.115.20): icmp_seq=2 ttl=49 time=11.3 ms
^C
> docker run ubuntu ping www.yahoo.com
PING ds-any-fp3-real.wa1.b.yahoo.com (46.228.47.114) 56(84) bytes of data.
64 bytes from ir2.fp.vip.ir2.yahoo.com (46.228.47.114): icmp_seq=1 ttl=45 time=46.5 ms
64 bytes from ir2.fp.vip.ir2.yahoo.com (46.228.47.114): icmp_seq=2 ttl=45 time=46.1 ms
^C

Here I ran two instances of the ping command. First I pinged www.google.com and then www.yahoo.com. I had to stop them both with CTRL-C to get back to the terminal.

> docker ps -a | head
CONTAINER ID        IMAGE               COMMAND                 CREATED              STATUS
7c44887b2b1c        ubuntu:14.04        ping www.yahoo.com      About a minute ago   Exit 0
72d1ca1b42c9        ubuntu:14.04        ping www.google.com     7 minutes ago        Exit 0

As you see, each command got its own container ID. We can further analyse the two containers with the inspect command. Below I compare the two ping commands I ran to make it more apparent how the two containers differ:

> docker inspect 7c4 > yahoo
> docker inspect 72d > google
> diff yahoo google
2,3c2,3
< "ID": "7c44887b2b1c9f0f7c10ef5e23e2643026c99029fce8f1575816a23e56e0c2d0",
< "Created": "2014-07-21T10:05:45.106057381Z",
---
> "ID": "72d1ca1b42c995973086a5fb3c5e256d5cb2c5055e8f9040037bb6bb915c8187",
> "Created": "2014-07-21T10:00:08.653219667Z",
6c6
< "www.yahoo.com"
---
> "www.google.com"
9c9
< "Hostname": "7c44887b2b1c",
---
> "Hostname": "72d1ca1b42c9",
29c29
< "www.yahoo.com"
---
> "www.google.com"
44,45c44,45
..

Notice that I don’t have to write the whole ID. For example, instead of 7c44887b2b1c, I just type the first three characters, 7c4. In most cases this will suffice.
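The reason it only works "in most cases" is that the prefix has to point at exactly one container. The idea can be sketched in a few lines of shell. This is my own illustration of prefix matching, not how Docker implements it, and resolve_id is a made-up name.

```shell
# resolve_id PREFIX ID...: print the single ID that starts with PREFIX.
# Fails when no ID matches or when the prefix is ambiguous.
# (Hypothetical helper illustrating the idea only.)
resolve_id() {
    prefix="$1"; shift
    match=""
    count=0
    for id in "$@"; do
        case "$id" in
            "$prefix"*) match="$id"; count=$((count + 1)) ;;
        esac
    done
    if [ "$count" -eq 1 ]; then
        printf '%s\n' "$match"
    else
        echo "prefix '$prefix' matches $count containers" >&2
        return 1
    fi
}

# The two container IDs from the ping example
resolve_id 7c4 7c44887b2b1c 72d1ca1b42c9
resolve_id 7 7c44887b2b1c 72d1ca1b42c9 || echo "ambiguous, type more characters"
```

With only these two containers, 7c4 and 72d are both unambiguous, while a bare 7 is not.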
